Five years ago, I had the opportunity to participate in the 5 Things I Wish I’d Known before My First PI Planning video series for piplanning.io. Now, I’m reflecting on those tips and sharing them in this blog.

My journey with SAFe® started with SAFe 2.5. Since then, I’ve enjoyed coaching and mentoring other coaches and leaders.

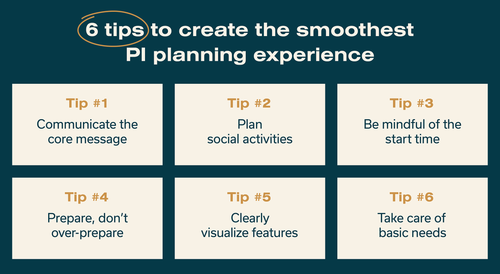

As an RTE, I’ve had the chance to facilitate PI planning and coach others to take over that role. While facilitating and coaching, I identified six key tips that created the smoothest PI planning experience for everyone.

Here they are:

- Communicate the core message

- Plan social activities

- Be mindful of the start time

- Prepare, don’t over-prepare

- Clearly visualize features

- Take care of basic needs

PI Planning Tip 1: Communicate the Core Message

In the standard agenda, the first half-day of PI planning focuses on the vision, desired deliverables for the PI, features, and architecture.

As the RTE, it’s important to check that the messages from management, including executives, are inspiring and motivational. These speeches should reflect on achievements from the previous PI, address the current state, and provide insight into the organization’s future, specifically how the organization will contribute to building that future in the next PI.

It’s helpful to review and rehearse the message while supporting effective storytelling. Think of a traditional story arc. Assign yourself and your customers specific roles in the story. Are you the hero, or is the customer the hero while you’re the fairy godmother? What obstacles have you helped your customers overcome? What happy endings have you or your customers created? Sequencing your presentations in this way (if only loosely) will make it easier for your audience to connect with your organization’s purpose.

One important ingredient of effective storytelling is an executive who knows the customer and the business. They can create a truly captivating story based on real experience in the field. Put yourself in your customer’s shoes and share the story or problem you’re trying to solve from their perspective. This will capture the audience’s attention and show your people exactly what they helped create for your customer or will create in the upcoming PI.

In summary, follow these pointers to create a core message that resonates with teams.

- Do a rehearsal beforehand to ensure smooth transitions between segments

- Support storytelling from one presentation to the next

- Share customer success stories or examples from the field

- Invite executives who know the customer and business to present and tell the story

PI Planning Tip 2: Plan Social Activities

Between days one and two, organize an activity like a fox trail or a bowling night. This unstructured time allows people to connect, converse, and build relationships.

However, it’s important to remember that there is still a second day of PI planning. Do not party too long!

Social activity ideas:

- Bowling night

- Fox trail

- Escape room

- Team dinners featuring local and international foods

If you don’t want to use outside work hours for social time, you can add icebreaker activities to the PI planning agenda. These are short, no more than 10-minute activities that allow people to learn about each other in a different context than work. However, it’s important to note that icebreaker activities don’t work in all cultural contexts, so use discretion when deciding whether or not to include them.

Here are some quick icebreaker activities:

- Chat surveys or questions: Use the survey tool that comes with online meeting applications like Google Meet or Zoom to poll the group on things like their favorite candy

- Breakout groups/partners to answer a question or share favorites

- Rapid-fire, round-robin question and answer (better in smaller groups): Ask the group a series of “this or that” questions (for example, horror or mystery?)

- Get-to-know-you bingo: Give everyone a card with different traits listed on it, like “Owns a dog” or “Has lived abroad.” During breaks, participants find people who have those traits and mark them on their cards until someone checks five boxes in a row and shouts “Bingo”

You can even get creative and pick an activity that matches your PI planning theme.

Because virtual PI planning is here to stay, we need to get creative with social activities that work from afar. Plan time for structured exchanges and organize remote socials.

During Covid, we had “blind dates” during lunch for those who did not want to eat alone. Participants were assigned to another teammate to dine with. This meant they socialized via video call during their lunch from the comfort of their own kitchens.

Human interaction is important, and PI planning is a great opportunity to get everyone together, even virtually, to create relationships that make collaboration a seamless and enjoyable experience.

PI Planning Tip 3: Be Mindful of Start Time

During the first half day of PI planning, a lot of conversations take place. My SAFe experience is primarily in Europe, particularly Switzerland and Austria, where many people take public transportation to work. Therefore, it’s important to be mindful of the start time. Beginning at 8:00 a.m. might not be suitable because people often travel by train or bus. Starting around 9:00 a.m. allows for a more feasible and effective schedule.

Additionally, it’s common for fatigue to set in during the first half day, potentially due to cultural factors. Different cultures practice different presentation methods. This means some cultures are more tolerant of longer presentations than others.

To maintain high energy levels, it’s beneficial to keep some talks shorter and initiate breakouts earlier than the proposed agenda.

My remote agenda differs from my on-site agenda. In remote settings, you’ll need more breaks than in on-site PI planning. Plan these breaks. Ask your Scrum Masters/Team Coaches to insist on these breaks. I have 15-minute breaks on my agenda every 60–75 minutes. During these breaks, I ask people to stand up and leave their desks to walk around, drink water, and do something physical. Remind your Scrum Masters/Team Coaches to do the same in the breakouts.
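If you like to draft your agenda in a script, here is a minimal Python sketch of that break rhythm. It assumes a 9:00 start, a hypothetical 12:00 end for the morning, and 75-minute work blocks followed by 15-minute breaks. The function name `build_agenda` and the example date are illustrative assumptions, not part of any SAFe tooling.

```python
from datetime import datetime, timedelta


def build_agenda(start, end, work_minutes=75, break_minutes=15):
    """Return (label, start, end) tuples alternating work blocks and breaks."""
    agenda = []
    cursor = start
    while cursor < end:
        # Work block, cut short if it would run past the end of the session
        work_end = min(cursor + timedelta(minutes=work_minutes), end)
        agenda.append(("work", cursor, work_end))
        cursor = work_end
        if cursor < end:
            # Break block, also capped at the end of the session
            break_end = min(cursor + timedelta(minutes=break_minutes), end)
            agenda.append(("break", cursor, break_end))
            cursor = break_end
    return agenda


day = datetime(2024, 1, 15)  # arbitrary example date (assumption)
morning = build_agenda(day.replace(hour=9), day.replace(hour=12))

for label, begin, finish in morning:
    print(f"{begin:%H:%M}-{finish:%H:%M}  {label}")
```

Adjust `work_minutes` anywhere between 60 and 75 to match how long your group can stay focused, and treat the printed times as a starting point for discussion with your Scrum Masters/Team Coaches rather than a fixed schedule.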

PI Planning Tip 4: Prepare, Don’t Over-Prepare

When preparing for PI planning, there are two approaches: A) Going in without stories and focusing on known features, or B) Going in with all the stories.

From my European experience, starting with fewer prepared stories yields better results.

Over-preparing with excessive detail can lead to wasted time. Imagine if each team spends three days preparing stories for their “assigned” features. They might then complain that two days of PI planning are wasted because they now have nothing to do.

The real waste lies in excessive pre-planning. What happens if the dependencies aren’t properly identified? Or if the business owner asks to reduce scope during PI planning due to a competitor’s actions? Valuable time would be wasted if the work that took time to plan is then descoped or deprioritized.

The magic of PI planning lies in the opportunity to learn together and collaborate closely.

PI Planning Tip 5: Visualize Features

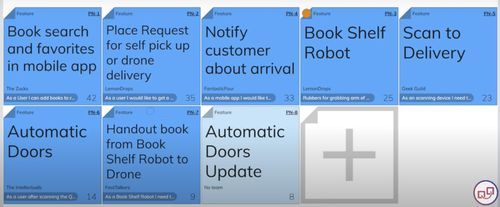

It’s important to share planning progress while teams are working. Therefore, it’s good practice to visualize features so they’re accessible to the entire organization.

There are different ways to visualize features based on whether you plan in person or virtually.

In-person

To track progress and provide visibility, pin the features on a board using multiple instances and colors. For example, use two blue slips and one gray slip, all pinned on top of each other. When a team selects a feature, they remove the blue slips and write their team’s name on the gray slip on the board.

This allows the RTE, Product Manager, or Business Owner to see the progress visually. The more gray slips, the greater the progress. It also helps the team members understand who is working on what and provides an overview. One blue slip can be kept in the team area, pinned to the iteration in which the feature will be finished, while the other blue slip goes to the ART planning board.

Virtual

You can accomplish this same goal without physical stickies if you’re working in a virtual environment.

piplanning.io has a color-coding system for the feature stickies. Once a feature has been assigned to a team, it will change color. It will also list the name of the team assigned to the work.

This is a simple and easy way to see which features have been planned and which haven’t.
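If you want to reason about this kind of board outside a tool, the idea can be sketched in a few lines of Python. This is only an illustration of the concept, not how piplanning.io works internally. The `Feature` class, the `planning_progress` function, the feature and team names, and the blue/gray colors (borrowed from the in-person slips) are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Feature:
    name: str
    team: Optional[str] = None  # None until a team picks the feature

    @property
    def color(self) -> str:
        # Hypothetical colors mirroring the in-person slips:
        # blue = not yet picked, gray = picked by a team
        return "gray" if self.team else "blue"


def planning_progress(features):
    """Return the fraction of features that a team has already picked."""
    if not features:
        return 0.0
    return sum(1 for f in features if f.team) / len(features)


# Made-up feature and team names for illustration
board = [Feature("Checkout redesign"), Feature("Search filters"), Feature("Audit log")]
board[0].team = "Team Alpha"

for feature in board:
    print(f"{feature.name}: {feature.color} ({feature.team or 'unassigned'})")
print(f"Planned: {planning_progress(board):.0%}")
```

The point of the sketch is the same as the board itself: one glance at the colors tells the RTE, Product Manager, or Business Owner how much of the feature list has found a team.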

PI Planning Tip 6: Take Care of Basic Needs

Maintaining wellbeing is crucial for a productive session.

As the saying goes, “A hungry bear makes no tricks,” or as participants in a German implementation training described it, “Ohne Mampf kein Kampf,” which translates to “No food, no fight.”

It’s impossible to concentrate when your basic needs aren’t being met. Food, hydration, and bathroom breaks are essential for productive PI planning.

Designate someone to organize snacks, coffee machines, water, brain food, and other refreshments to ensure everyone stays energized and focused during the sessions.

If you’re virtual, ensure there’s a lunch built into the schedule and breaks during long meetings, especially during the first day. Encourage people to take breaks as needed throughout.

Conclusion

Now that I have dozens of PI planning events under my belt, I can safely say these six tips will help you run a strong event that brings the right amount of certainty to an uncertain and challenging, but rewarding, experience.

In addition to these tips, here are some SAFe PI planning resources.

- piplanning.io

- PI Planning page in SAFe Studio™

- How to Run a Hybrid PI Planning Event blog

- Facilitation Tips to Excel at the RTE Role blog

- SAFe PI Planning Toolkit

About Nikolaos Kaintantzis

Nikolaos has always been driven by improving people’s working lives. As a developer, he wrote UIs that made work easier. As an organizational developer, systemic coach, and SPCT, he has added skills to that ambitious endeavor. He partners with organizations to develop them so that both the organization and its employees benefit.

Connect with Nikolaos on LinkedIn.
